AI coaching and therapy platforms face a key challenge: how to provide personalized support while protecting user privacy. Personalization relies on analyzing sensitive data – like mood, stress, and behavior patterns – to deliver tailored advice. But without strong privacy protections, users may hesitate to share that information, limiting the platform’s effectiveness.

Key takeaways:

- Personalization: Platforms like Aidx.ai combine methods such as CBT with mood and stress tracking, an approach the platform associates with 78% higher goal success [2].

- Privacy: Aidx.ai employs encryption, data separation, and user-controlled deletion to safeguard data, unlike many generic platforms that may share or retain user information.

- Trust: Without trust, users are less likely to open up, reducing the impact of coaching.

For meaningful progress, choose platforms prioritizing both personalization and rigorous privacy safeguards.

1. Aidx.ai

Data Usage

Aidx.ai leverages conversation data to provide personalized coaching experiences. By tracking energy levels, mood, and stress patterns over time, it can identify early warning signs of burnout or potential crises before they escalate [2]. Coaching sessions are informed by real-time data from users’ Roadmaps, helping to transform intentions into actionable steps. This approach has been associated with notable progress in achieving personal goals [2].
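To make the idea of spotting early warning signs concrete, here is a minimal sketch that compares a recent average against an earlier baseline. It is an illustration only, not Aidx.ai's actual method: the `early_warning` function, the 1 to 10 stress scale, the window size, and the threshold are all assumptions made for this example.

```python
from statistics import mean


def early_warning(stress_scores, window=3, threshold=1.5):
    """Return True when recent stress sits well above the earlier baseline.

    stress_scores: chronological self-ratings on a hypothetical 1-10 scale.
    window: how many of the latest entries count as "recent" (assumed value).
    threshold: rise over baseline that triggers a flag (assumed value).
    """
    if len(stress_scores) < 2 * window:
        return False  # too little history to compare against
    baseline = mean(stress_scores[:-window])  # everything before the recent window
    recent = mean(stress_scores[-window:])    # the latest `window` entries
    return recent - baseline >= threshold


calm_week = [3, 4, 3, 4, 3, 4, 3]
rising_week = [3, 4, 3, 4, 6, 7, 8]

print(early_warning(calm_week))    # False
print(early_warning(rising_week))  # True
```

The sketch also shows why history matters: with fewer than `2 * window` entries there is no baseline, so nothing can be flagged.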

The platform continuously learns from user interactions. It identifies behavioral trends and tests various coaching methods to determine what resonates best. Hali Holeszowski, a mobility coach and founder, described the AI as "remarkably intuitive", noting its ability to pinpoint issues and ask targeted questions more efficiently than traditional methods [2]. Over time, this adaptive learning allows the platform to fine-tune its guidance, aligning with each user’s thought processes and needs.

Adaptation to Users

Aidx.ai’s evolving understanding of its users forms the basis of its personalized coaching style. It incorporates evidence-based therapeutic techniques such as Cognitive Behavioral Therapy (CBT), Dialectical Behavior Therapy (DBT), and Acceptance and Commitment Therapy (ACT) [2]. The platform adjusts its conversational tone and strategies based on the user’s unique mindset and current challenges.

"Aidx doesn’t know you on day one. It learns your patterns, tests what works, adjusts as you go. Give it time. The more you use it, the sharper it gets." [2]

This ability to adapt ensures the AI can deliver targeted advice and practical solutions. Vera Martins, a psychologist and business owner, praised the platform’s capacity to handle complex situations by offering actionable insights and tips [2].

Privacy Safeguards

Privacy is a cornerstone of Aidx.ai. User data is encrypted during storage and transfer, and the platform employs a distinct security measure by separating encrypted data storage from decryption processes, reducing potential risks [1]. A strict no-human policy ensures that no staff member ever views user conversations or personal data [1][2]. Users maintain complete control over their information, with the option to delete all records through web chat settings or email requests [1]. Features like "Incognito mode", which leaves no trace, and a "Lock screen" to block unauthorized access, further enhance privacy [2].
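The separation of storage from decryption can be sketched roughly as follows. This is a toy model under stated assumptions, not Aidx.ai's implementation: the `CiphertextStore` and `KeyService` classes are hypothetical, and a one-time pad built from Python's `secrets` module stands in for whatever production cipher a real system would use.

```python
import secrets


class CiphertextStore:
    """Hypothetical storage side: holds encrypted blobs, never keys or plaintext."""

    def __init__(self):
        self._blobs = {}

    def put(self, record_id, ciphertext):
        self._blobs[record_id] = ciphertext

    def get(self, record_id):
        return self._blobs[record_id]


class KeyService:
    """Hypothetical decryption side: holds keys, never stores the ciphertext."""

    def __init__(self):
        self._keys = {}

    def encrypt(self, record_id, plaintext):
        # Toy one-time pad: a fresh random key as long as the message.
        key = secrets.token_bytes(len(plaintext))
        self._keys[record_id] = key
        return bytes(p ^ k for p, k in zip(plaintext, key))

    def decrypt(self, record_id, ciphertext):
        key = self._keys[record_id]
        return bytes(c ^ k for c, k in zip(ciphertext, key))


store, keys = CiphertextStore(), KeyService()
message = b"felt stressed today"

# The store receives only ciphertext; the key stays with the key service.
store.put("note-1", keys.encrypt("note-1", message))

# Reading the note back requires both systems to cooperate.
restored = keys.decrypt("note-1", store.get("note-1"))
print(restored == message)  # True
```

The point of the design is that a breach of either component alone yields nothing readable: the store has no keys, and the key service has no data.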

"Your data is sacred: We will never sell or share your information." – Natalia Komis (CEO) & Nicklas Wolff (CTO), Aidx.ai [1]

This strong commitment to privacy reflects the company’s core values. CTO Nicklas Wolff’s expertise in IT security ensures that data protection remains a top priority. The platform treats conversations with the utmost confidentiality and refrains from monetizing user data, fostering trust – a key element for effective coaching.

2. Generic AI Coaching Platforms

Data Usage

AI coaching platforms rely on various data sources, including chat interactions, written goals, and usage metadata such as login frequency, location, and device information. This data helps create detailed user profiles and is often processed using psychological models such as CBT (Cognitive Behavioral Therapy), DBT (Dialectical Behavior Therapy), and ACT (Acceptance and Commitment Therapy) to deliver customized feedback [4][2].

However, many platforms go beyond the intended use of this data. Instead of limiting it to personalizing user experiences, they often employ it for broader purposes like model training and research [4]. While frameworks like SMART on FHIR emphasize collecting data for specific purposes, generic platforms frequently extend its use beyond the agreed scope, potentially diluting the personalization they aim to deliver. This broad approach to data collection can influence how these platforms refine their coaching methods over time.

Adaptation to Users

Generic platforms are designed to learn and improve by analyzing user behavior. Over time, they refine their coaching strategies, transforming vague goals into actionable plans and helping users track progress in a structured way [2].

But this adaptability comes at a cost. As platforms learn more about a user, they inevitably collect and store more data. Some systems retain this information in logs, backups, or training datasets, even after users request deletion [4]. This means that as these platforms evolve to offer better personalization, they must also address the increasing challenges around data privacy and storage.

Privacy Safeguards

Most platforms implement basic privacy protections, such as encrypted data storage, GDPR compliance, and options for user-controlled data deletion [1][2]. Features like incognito modes and lock screens are also common. However, generic platforms often fall short when it comes to offering detailed consent options for how user data is used [4].

The main issue lies in consent quality. Industry best practices recommend giving users granular control, such as the ability to opt out of model training while still accessing core features. Yet, many platforms bundle data usage into broad terms of service, leaving users with limited choices [4]. Red flags to watch for include platforms that make opting out difficult, fail to provide clear crisis escalation paths, or don’t transparently explain how they use data beyond immediate coaching sessions [4]. This tension between personalization and potential overreach underscores the privacy challenges inherent in AI coaching platforms.
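Granular consent of the kind described above might look like the following sketch. The `ConsentSettings` fields, their defaults, and the `allowed_uses` helper are hypothetical names chosen for illustration; they do not describe any specific platform's settings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConsentSettings:
    """Hypothetical per-purpose consent flags, each set independently."""
    personalization: bool = True   # tailoring advice to the user's history
    model_training: bool = False   # off unless the user opts in (assumed default)
    research: bool = False         # off unless the user opts in (assumed default)


def allowed_uses(consent):
    """List the purposes a user's data may serve under the given consent."""
    uses = ["core_coaching"]  # core features stay available whatever else is declined
    if consent.personalization:
        uses.append("personalization")
    if consent.model_training:
        uses.append("model_training")
    if consent.research:
        uses.append("research")
    return uses


opted_out = ConsentSettings(model_training=False)
print(allowed_uses(opted_out))  # ['core_coaching', 'personalization']
```

Keeping each purpose as its own flag is what separates this from a bundled terms-of-service checkbox: declining model training does not remove access to coaching.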

Strengths and Weaknesses

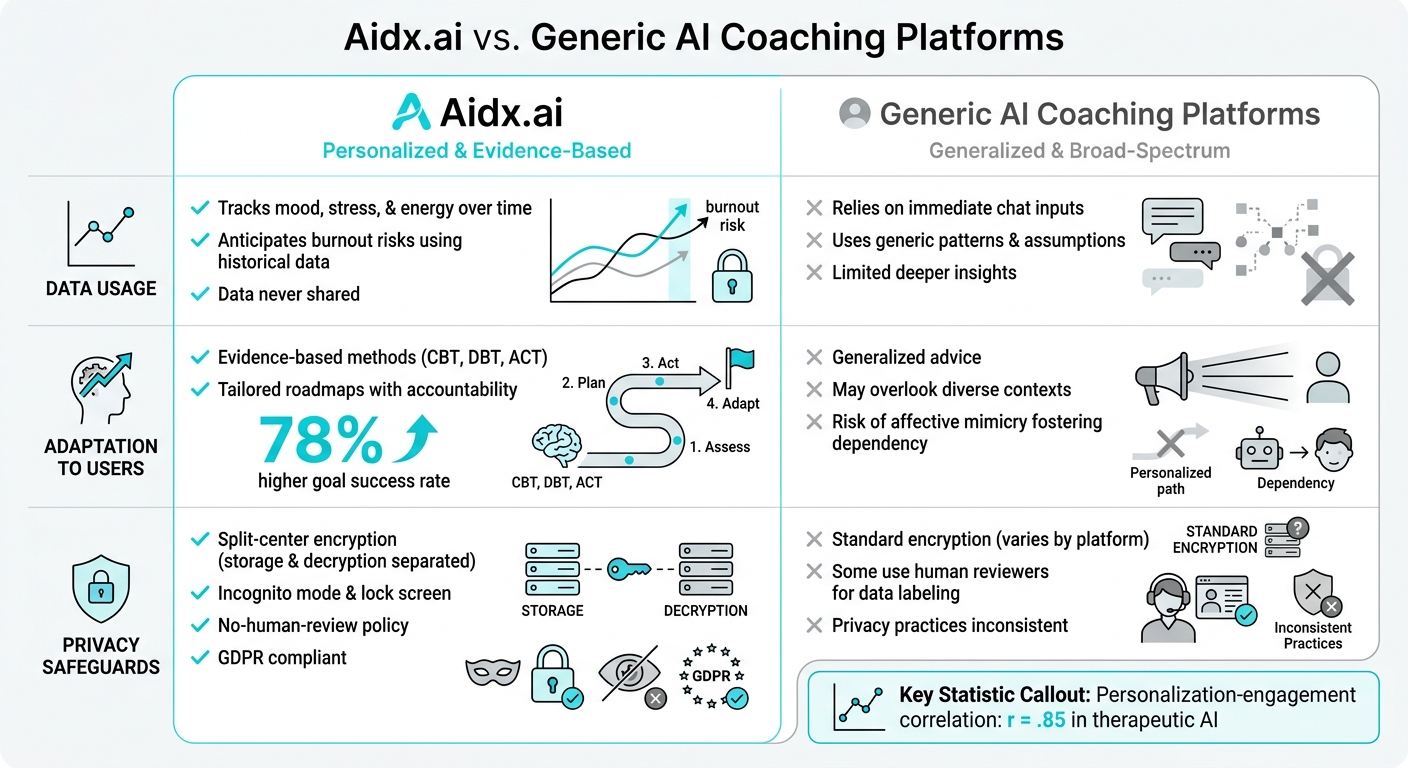

Aidx.ai vs Generic AI Coaching Platforms: Privacy and Personalization Comparison

This section dives into the trade-offs involved in AI coaching platforms, focusing on how data is utilized, who controls it, and whether personalization compromises safety. Every approach has its pros and cons, and understanding these nuances is key.

| Criterion | Aidx.ai Approach | Generic AI Coaching Platforms |
|---|---|---|
| Data Usage | Tracks mood, stress, and energy over time, using historical data to anticipate burnout risks [2]. Data is strictly not shared [1]. | Relies mainly on immediate chat inputs, using generic patterns and assumptions instead of deeper insights [5]. |
| Adaptation to Users | Implements evidence-based methods like CBT, DBT, and ACT, requiring a learning period but achieving 78% higher goal success through tailored roadmaps and accountability [2]. | Offers generalized advice that can overlook diverse contexts [5]. May use affective mimicry to boost engagement, potentially fostering dependency rather than growth [3]. |
| Privacy Safeguards | Employs split-center encryption, ensuring data storage and decryption occur separately. Features include Incognito mode, lock screen, and GDPR compliance [2][1]. | Standard encryption is common, but privacy practices vary. Some platforms use human reviewers for data labeling and may lack strict "no-human-review" policies [5]. |

The table highlights a central challenge: balancing personalization with privacy. Research shows a strong link (r = .85) between personalization and user engagement in therapeutic AI [3]. However, hyper-personalized systems that mimic emotional intimacy risk creating dependency, while overly generic systems fail to engage users effectively.

Aidx.ai tackles this with what psychotherapist Adewale Ademuyiwa calls "cultural humility." Instead of assuming context, the system asks thoughtful questions like, "Tell me more about your workplace culture," before offering advice [5]. Boyoung Kang from Sungkyunkwan University adds:

"Engagement and safety may not necessarily be mutually exclusive: when grounded in boundary-aware design, therapeutic AI systems can support ethically aligned personalization while reducing risks related to dependency" [3].

Privacy is another cornerstone of trust in AI coaching. Aidx.ai’s founders, Natalia Komis and Nicklas Wolff, emphasize:

"Your trust is our bread & butter, since you can’t have good coaching or therapy results without it" [1].

Trust is critical – without it, users are less likely to open up, and progress remains shallow. Aidx.ai’s split-data center model reduces breach risks, even if one part of the system is compromised [1].

The trade-off? Patience. Aidx.ai requires consistent user engagement to build a detailed understanding. But this same depth allows it to identify stress and burnout risks early – something platforms without historical data simply can’t achieve [2][5].

Conclusion

Personalization and privacy don’t have to be at odds. Aidx.ai shows how a strong privacy framework can support effective personalization. By using a split-data center model, where encrypted data is stored in one location and decrypted in another, the platform limits the damage if one system is compromised [1]. It also tracks mood, stress, and energy patterns over time, drawing on that history to flag signs of burnout before they become serious [2].

This setup creates a unique trade-off for users: patience for precision. Aidx.ai requires consistent interaction to learn individual patterns deeply, which leads to better long-term results. In contrast, quick, generic responses may feel convenient but often lack the depth needed for meaningful progress [2].

When selecting an AI coaching platform, consider what you’re sharing. If you’re dealing with sensitive topics – whether personal or professional – make sure the platform offers strong privacy measures [1]. For those aiming for real progress rather than surface-level advice, focus on platforms that use proven methods like CBT, DBT, and ACT, paired with tools such as progress tracking and accountability features [2].

Trust is the foundation of effective coaching. Without it, users may hold back crucial details, limiting their growth. In the end, trust and privacy are not just add-ons – they are essential for both personal development and data security.

FAQs

How does Aidx.ai personalize coaching without exposing my private data?

Aidx.ai tailors coaching to your needs by analyzing your patterns over time, all while keeping your privacy a top priority. Your data is fully encrypted, never shared or sold, and remains inaccessible to humans. Plus, you’re in control – delete your data whenever you want to ensure total confidentiality.

Can I use Aidx.ai without saving any conversation history?

Yes. Aidx.ai’s Incognito mode leaves no trace of a session [2]. Outside Incognito mode, your data is encrypted both in storage and in transfer, no staff member views it, and you can delete it whenever you choose [1].

If I delete my data in Aidx.ai, is it fully removed everywhere?

Yes, when you delete your data in Aidx.ai, it is completely removed from the platform. All conversations are encrypted, never viewed by humans, and you have full control to erase everything at any time, ensuring your privacy and confidentiality are protected.