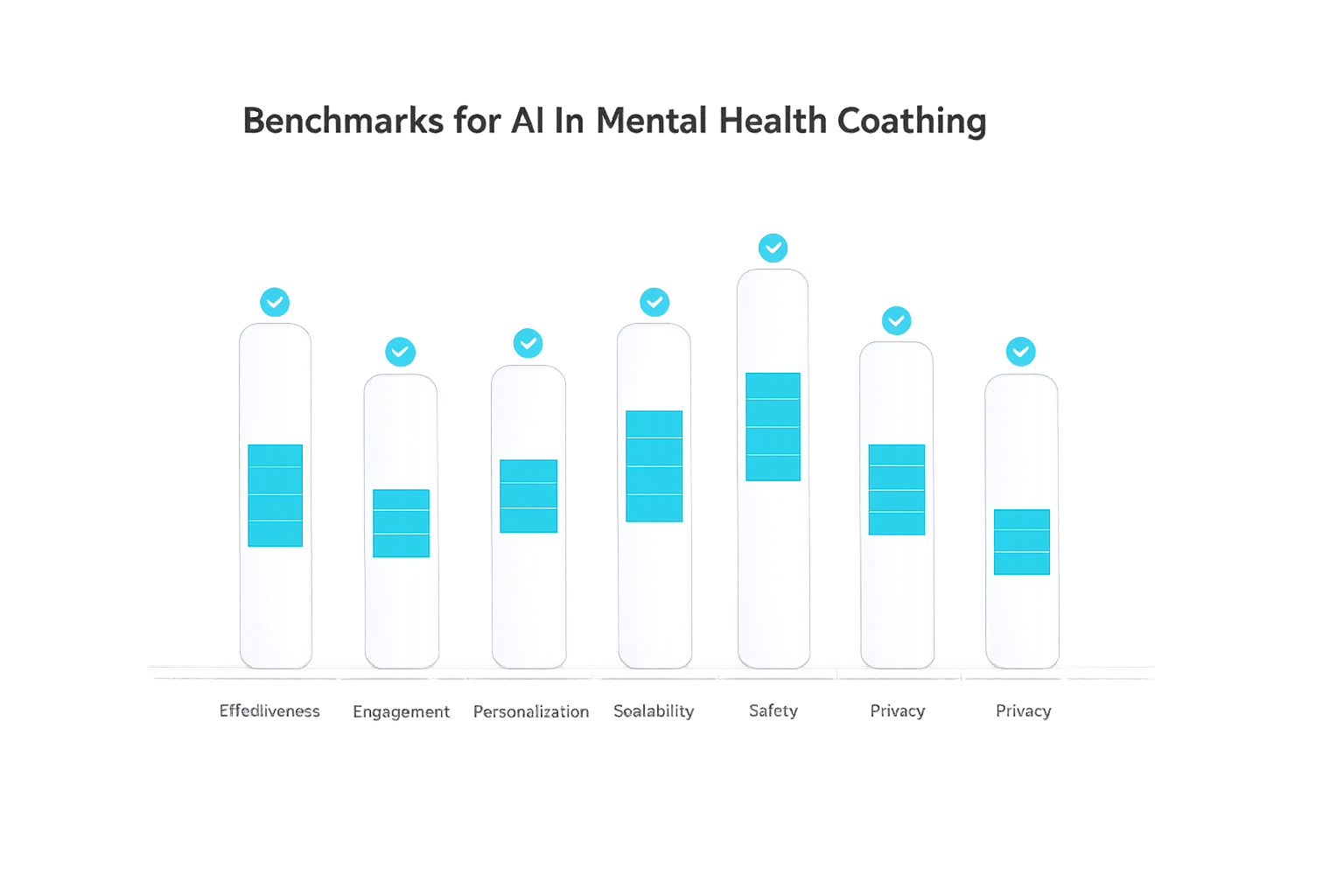

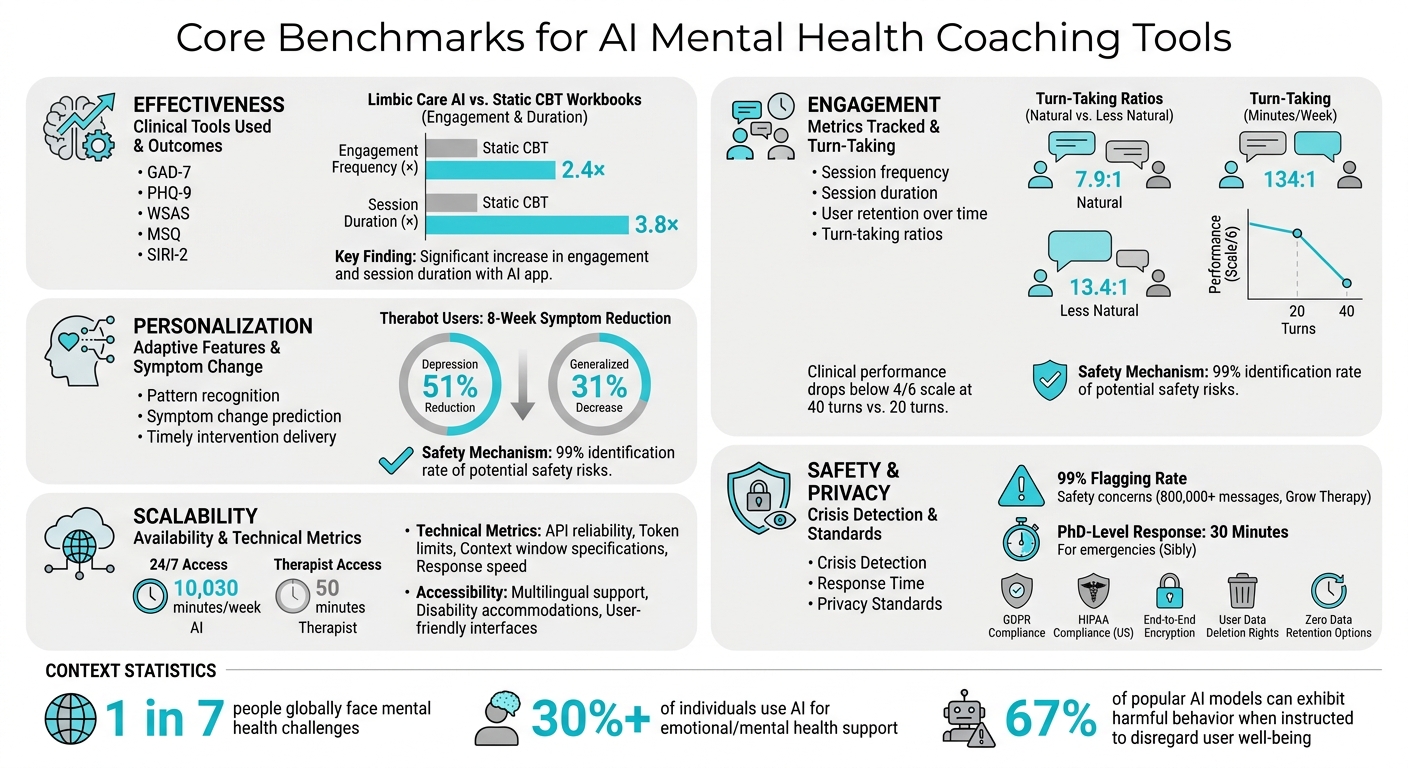

AI tools for mental health coaching are becoming more popular, but ensuring their safety, effectiveness, and reliability is critical. Benchmarks act as quality checks, helping evaluate how well these tools perform in areas like symptom reduction, engagement, personalization, and privacy. Here’s a quick summary of what these benchmarks cover:

- Effectiveness: Measured through clinical tools like GAD-7 (anxiety) and PHQ-9 (depression). AI tools are assessed for their ability to reduce symptoms and improve well-being.

- Engagement: Tracks user interactions, such as session frequency and duration, to ensure meaningful participation.

- Personalization: Evaluates how well AI adapts to user behavior and provides tailored interventions.

- Scalability: Focuses on response speed, 24/7 availability, and accessibility for diverse users.

- Safety and Privacy: Ensures crisis detection, human oversight, and strict data protection protocols.

With 1 in 7 people facing mental health challenges globally and over 30% using AI for support, these benchmarks are essential for accountability and trust. Tools like Aidx.ai and frameworks like PsychEval and MindBenchAI are leading efforts to set high standards in this space.

Core Benchmarks for AI Mental Health Coaching Tools: Effectiveness, Engagement, Personalization, Scalability, and Safety

PSI-Bench: Revolutionizing Depression Patient Simulator Evaluation with AI

Core Metrics for AI Performance in Mental Health Coaching

Evaluating AI performance in mental health coaching goes beyond simple interactions. The process focuses on three main areas: effectiveness, engagement, and personalization. These benchmarks aim to determine how well an AI tool supports mental health improvements. Let’s take a closer look at how these metrics work together to measure the impact of AI in this field.

Effectiveness: Symptom Reduction and Behavior Change

Effectiveness is measured using validated clinical tools like the Generalized Anxiety Disorder 7-item scale (GAD-7) for anxiety, the Patient Health Questionnaire (PHQ-9) for depression, the Work and Social Adjustment Scale (WSAS) for functional issues, and the Mini Sleep Questionnaire (MSQ) for sleep problems [7]. For example, a randomized trial conducted by Limbic from June to July 2024 (NCT06459128) compared their "Limbic Care" AI app to static digital CBT workbooks. Over six weeks, the AI group saw a 2.4× increase in engagement frequency and a 3.8× increase in session duration. Users engaging with AI-guided sessions experienced greater anxiety relief and improved well-being [7].

New benchmarks also assess clinical reasoning through expert-rated responses and tools like the Suicide Intervention Response Inventory 2 (SIRI-2), which assigns numeric ratings to AI responses based on their appropriateness [6][3]. Platforms such as MindBenchAI, launched in September 2025 by a team including Harvard and NAMI researchers, evaluate these reasoning skills. They link outcomes to real-world clinical accountability and crisis management.

As Bridget Dwyer and colleagues explain:

MindBenchAI is designed to provide easily accessible/interpretable information for diverse stakeholders… assessing both profile (i.e., technical features, privacy protections, and conversational style) and performance characteristics (i.e., clinical reasoning skills).

- Bridget Dwyer et al. [6]

These measures ensure AI tools meet the rigorous standards required for safe and effective mental health support.

Engagement: Retention and Session Data

Engagement metrics track how consistently users interact with an AI tool, which is crucial since higher engagement with therapeutic tasks is strongly linked to better outcomes [7]. Key metrics include session frequency, duration, and user retention over time.

Turn-taking ratios between AI and user messages also play a role in perceived naturalness. For instance, lower ratios (7.9:1) are associated with more natural conversations compared to higher ratios (13.4:1) [2]. In one study, a multi-agent AI system generated 459 messages averaging 230 characters, while a single-agent system produced 336 messages averaging 409 characters [2].

However, engagement alone isn’t enough – it must align with clinical quality. Studies show that large language models can experience performance declines in longer interactions. For example, a 2026 study revealed that clinical performance for 12 advanced models (like GPT-5 and Claude 4.5) dropped below 4 on a 1–6 scale during therapy sessions with 40 turns, compared to 20 turns [5].

Platforms like Aidx.ai address this by combining engagement tracking with structured progress monitoring, such as visual roadmaps and weekly accountability reports. This approach ensures that high engagement translates into meaningful progress toward user-defined goals, confirming the importance of sustained interaction in mental health interventions.

Personalization: Adaptive Learning and Progress Monitoring

Personalization focuses on whether AI tools adapt to user behavior. This involves recognizing patterns, predicting symptom changes, and delivering timely interventions. While engagement reflects user commitment, personalization ensures that interventions are tailored to meet evolving needs.

For instance, in early 2025, Nicholas Jacobson and his team at Dartmouth College introduced Therabot, a generative AI chatbot that tracks symptom changes and provides timely digital interventions. An eight-week clinical trial showed users experienced a 51% reduction in depression symptoms and a 31% decrease in generalized anxiety [9].

As Jacobson notes:

If you can monitor and predict ebbs and flows in symptoms, then you can deliver digital interventions at the right time. – Nicholas Jacobson [9]

In April 2026, Stanford researchers developed Bloom, an app featuring Beebo, an AI agent that uses "dialog state prompt chains" to adapt its responses. Beebo tailored workout plans based on user feedback, helping users develop healthier mindsets around exercise – even if their activity levels didn’t significantly increase [8].

Another example includes an AI coach that processed over 800,000 messages, with 20% focused on relationship issues. The system used adaptive learning to help users practice skills between sessions and included a safety mechanism that identified 99% of potential safety risks during quality reviews [10].

Platforms like Aidx.ai incorporate these personalization principles by recognizing patterns and helping users understand how their behaviors, like social withdrawal, influence symptoms such as anxiety. This approach encourages users to recognize their capabilities and make informed choices, ensuring personalization contributes to effective and accountable mental health support.

Scalability and Access Benchmarks

Scalability benchmarks evaluate whether AI mental health tools can effectively serve large and diverse populations while maintaining quality. This includes assessing response speed, availability, and accessibility features to ensure support is timely and inclusive, regardless of factors like time zone, language, or technical skills.

Response Speed and 24/7 Availability

Fast response times and constant availability are essential for AI mental health tools. As Henry Ly, Co-Founder & CTO of Adamo Software, points out:

A therapist sees you for 50 minutes a week. An app is available for the other 10,030 minutes. [12]

This stark contrast underscores the importance of instant responses. Platforms like Mindbench.ai focus on technical stability by evaluating factors such as API reliability, token limits, and context window specifications [4]. Meanwhile, tools like Cleanlab‘s Trustworthy Language Model (TLM) provide real-time trust scores to minimize errors and flag situations that may require human intervention [11].

However, availability alone doesn’t cut it – performance must remain steady over extended interactions. Ricardo Rei, Head of AI Research at Sword Health, emphasizes:

We can only improve what we can measure so we studied how to measure them. [5]

To address this, the PsychEval benchmark, introduced in January 2026, measures how well AI tools maintain memory and reasoning across 6–10 sessions, which is crucial for long-term coaching and support [1]. For example, Aidx.ai meets these standards by offering seamless 24/7 access through any browser or device, ensuring consistent support without performance issues. These advancements pave the way for equally rigorous standards in accessibility and privacy.

Accessibility and Privacy Standards

For AI tools to scale effectively, they must be both accessible and secure. Accessibility benchmarks ensure tools cater to diverse users, including those with disabilities, limited tech access, or language barriers. Many systems now include multilingual support, such as HamRaz’s Farsi-language functionality and specialized SAT chatbots designed for culturally specific therapy [2].

User-friendly designs also play a big role. Multi-agent conversational frameworks, which mimic natural human dialogue with frequent turn-taking and shorter messages, score higher in perceived usability (3.9/5) compared to single-agent systems (3.0/5) [2]. This approach helps users who might struggle with rigid, rule-based interfaces.

Privacy is another critical factor. Tools must meet regulations like GDPR in the EU and HIPAA in the US to protect personal and health data [13]. MindBench.ai, launched in collaboration with NAMI in February 2026, provides transparent technical details on data retention, encryption, and memory management [3]. As the MindBench.ai team explains:

The regulation and safety assessment of LLMs and LLM-based tools is not simple as these models can respond to a near-infinite variety of prompts and produce a near-infinite variety of outputs. [3]

Aidx.ai adopts a privacy-first approach by encrypting conversations, ensuring full GDPR compliance, and allowing users to delete their data. These measures not only protect user information but also build trust, ensuring scalable access without compromising safety.

sbb-itb-d5e73b4

Safety and Ethics Standards for AI Mental Health Tools

When AI tools are designed to engage in mental health conversations, ensuring safety becomes a top priority. Unlike basic mood trackers, systems powered by large language models (LLMs) can generate unpredictable responses, making it essential to implement multiple layers of protection.

Human Oversight and Crisis Escalation

AI mental health tools need to recognize their limitations, especially in high-risk situations. Crisis detection systems use machine learning classifiers to analyze user messages for signs of self-harm, substance abuse, or severe distress. When such triggers are identified, the AI must pause its responses and immediately connect users with human crisis support.

In April 2026, Grow Therapy introduced an AI-powered "Coach" feature with a robust safety framework. This system includes automated quality scoring and regular reviews conducted by licensed clinicians. If a safety concern arises, the AI halts its interaction and provides crisis resources. By April 2026, this setup had successfully flagged 99% of potential safety concerns across more than 800,000 messages [10]. Matt Scult, Ph.D., Principal of Clinical AI at Grow Therapy, emphasized their meticulous approach:

We wanted to make sure we put safety first and weren’t just relying on one layer of protection. So we built multiple independent safety layers, automated quality scoring of every conversation, and ongoing review by a team of licensed clinicians. [10]

Sibly, Inc. takes a similar approach, incorporating human expertise for high-risk cases. Their AI-driven text-based coaching service uses machine learning to identify 40 specific topics and detect shifts in sentiment. In emergencies involving danger to self or others, PhD-level experts respond within 30 minutes [15]. To evaluate these systems, standardized tools like the Suicide Intervention Response Inventory 2 (SIRI-2) help assess how well AI responses align with clinical best practices [4].

While effective crisis escalation is vital, protecting user data is equally important.

Data Privacy and Security Measures

In addition to safety protocols, rigorous data privacy measures are essential. MindBench.ai, launched in February 2026 in collaboration with the National Alliance on Mental Illness (NAMI), evaluates AI tools using 105 general criteria and 59 LLM-specific characteristics. These include data retention policies, encryption standards, memory management for conversations, and user authentication methods [4][3].

Compliance with GDPR is a baseline requirement for mental health AI tools. Since GDPR classifies speech data as personal data, meeting these standards involves encryption, clear privacy policies, and giving users control over data deletion [13][14]. The highest level of compliance mandates zero data retention, requiring session recordings and raw data to be deleted immediately after processing [14].

Aidx.ai exemplifies these practices by adhering to strict GDPR standards. The platform encrypts all data and allows users to delete their information. For particularly sensitive topics, its Incognito Mode ensures there is no trace of the interaction, even for users sharing devices with family members.

Advanced privacy measures like Differential Privacy and Federated Learning are also critical to prevent data leaks [13]. For voice-based tools, metrics like Equal Error Rate (EER) measure how well systems anonymize voices while retaining diagnostic markers [13]. As the MindBench.ai team points out:

An LLM that offers superior mental health support but owns a user’s personal health information presents an individual choice that users can only make if profiling information is accessible. [3]

The stakes are high: research indicates that 67% of popular AI models can exhibit harmful behavior when instructed to disregard user well-being [16]. By combining stringent safety protocols with robust privacy measures, AI tools can uphold accountability and contribute to ethical mental health support systems.

New Developments in AI Benchmarking for Mental Health

Measuring LLM Performance in Mental Health Contexts

AI tools in mental health are now being evaluated for how well they align with psychotherapeutic principles. In April 2026, Abdullah Mazhar and his team introduced FAITH-M, a benchmark designed to measure AI responses against six key principles: non-judgmental acceptance, warmth, respect for autonomy, active listening, reflective understanding, and situational appropriateness [17]. Using the CARE framework, their model achieved an F-1 score of 63.34 – an impressive 64.26% improvement over the Qwen3 baseline [17]. The research team highlighted the importance of this work:

The increasing use of large language models in mental health applications calls for principled evaluation frameworks that assess alignment with psychotherapeutic best practices beyond surface-level fluency. [17]

Another area of focus is long-term mental health support. In January 2026, Qianjun Pan and a team of researchers introduced PsychEval, a benchmark designed to assess AI counselors over multiple sessions – ranging from 6 to 10 – rather than isolated interactions. This framework includes an extensive dataset with over 600 meta-skills and 4,500 atomic skills, covering five therapeutic approaches such as Cognitive Behavioral Therapy (CBT) and Psychodynamic Therapy [1]. PsychEval also uses more than 2,000 diverse client profiles to evaluate how well AI systems maintain memory continuity, adapt their reasoning, and dynamically track goals over time [1]. As the researchers put it:

Realistic counseling is a longitudinal task requiring sustained memory and dynamic goal tracking. We propose a multi-session benchmark… that demands critical capabilities such as memory continuity, adaptive reasoning, and longitudinal planning. [1]

One practical example of these advancements is Aidx.ai, a platform that combines evidence-based therapeutic methods with structured goal tracking and memory continuity, ensuring consistent and tailored support. These efforts are paving the way for more robust accountability measures in mental health AI.

Future Directions for AI Accountability Metrics

As evaluation methods for large language models (LLMs) improve, new benchmarks are focusing on accountability and transparency. In September 2025, researchers like John Torous and Bridget Dwyer collaborated with the National Alliance on Mental Illness (NAMI) to create MindBenchAI, an online platform for evaluating mental health LLMs. This tool assesses both the "profile" (privacy protections, technical features, conversational style) and "performance" (clinical reasoning skills) of these systems [6]. It incorporates 48 questions from the MINDapps.org framework, along with 59 new criteria tailored to LLMs, offering clear, objective standards for patients, clinicians, and regulators [3].

A growing trend involves "living" benchmarks that evolve alongside AI models rather than providing static evaluations [3]. Researchers are also exploring adversarial testing methods to challenge AI systems with realistic scenarios, such as information gaps, bias traps, and distractor data, ensuring their clinical reasoning remains sound under pressure [3]. With over 30% of individuals already turning to LLMs for emotional support and mental health challenges affecting 1 in 7 people globally [3], these initiatives are crucial for ensuring AI tools meet rigorous ethical and clinical standards before they are widely adopted.

Conclusion

The benchmarks discussed here – from symptom reduction and user engagement to privacy safeguards and crisis management protocols – serve as essential tools for assessing the safety, effectiveness, and reliability of AI mental health platforms. These standards ensure that tools like Aidx.ai are not only capable of natural, conversational interactions but also support memory continuity across sessions, adapt to individual user needs, and safeguard sensitive data with robust encryption and full GDPR compliance. With the growing global demand for mental health support, thorough evaluation is more important than ever.

Recent advancements are pushing these benchmarks even further. Emerging tools like PsychEval and MindBenchAI are moving away from static assessment models, introducing frameworks that evaluate how well an AI reaches its conclusions – not just whether it provides the correct response. This shift is crucial when working with vulnerable populations. As the MindBenchAI research team explains:

This platform establishes a critical foundation for the dynamic, empirical evaluation of LLM-based mental health tools – transforming assessment into a living, continuously evolving resource rather than a static snapshot. [3]

That said, the unpredictable nature of large language models (LLMs) demands ongoing, real-time assessments. The same input can produce varying responses, and new risks – such as emotional over-reliance, unhealthy parasocial bonds, and cognitive reinforcement of problematic patterns – require specialized testing methods still under development. Moreover, as these models evolve, their reasoning and personality traits can shift rapidly, making it essential to update benchmarks frequently to remain relevant.

Platforms like Aidx.ai demonstrate how rigorous standards can enhance both the effectiveness and accountability of AI mental health tools. By integrating evidence-based practices like CBT, DBT, and ACT, along with structured goal tracking and long-term memory features, these platforms set a high bar. As the field continues to advance, collaboration among developers, clinicians, and regulators will be key to refining evaluation methods, addressing regulatory gaps, and ensuring user safety stays at the forefront.

FAQs

How can I tell if an AI mental health coach is actually helping me?

To gauge how well an AI mental health coach works, you can assess it using key benchmarks such as clinical relevance, empathy, safety, and goal achievement. These criteria are commonly used in research and validation studies to measure performance. Additionally, tracking your own progress over time – like noting improvements in your emotional well-being or how effectively you’re meeting your personal goals – can give you a clearer sense of whether the tool is helping you.

What should an AI coach do if I mention self-harm or a crisis?

When an AI coach encounters mentions of self-harm or a crisis, safety must come first. This means following established clinical standards, which may involve notifying human professionals or emergency services if needed. The focus on clinical validation and safety-critical evaluation ensures that responses are both timely and appropriate, prioritizing the well-being of the individual.

How is my data protected when using an AI coaching tool?

Your data is protected through encryption, ensuring it stays secure. Conversations are never sold, shared, or accessed by humans. Plus, you have full control – delete all your data anytime to maintain privacy and meet GDPR compliance.