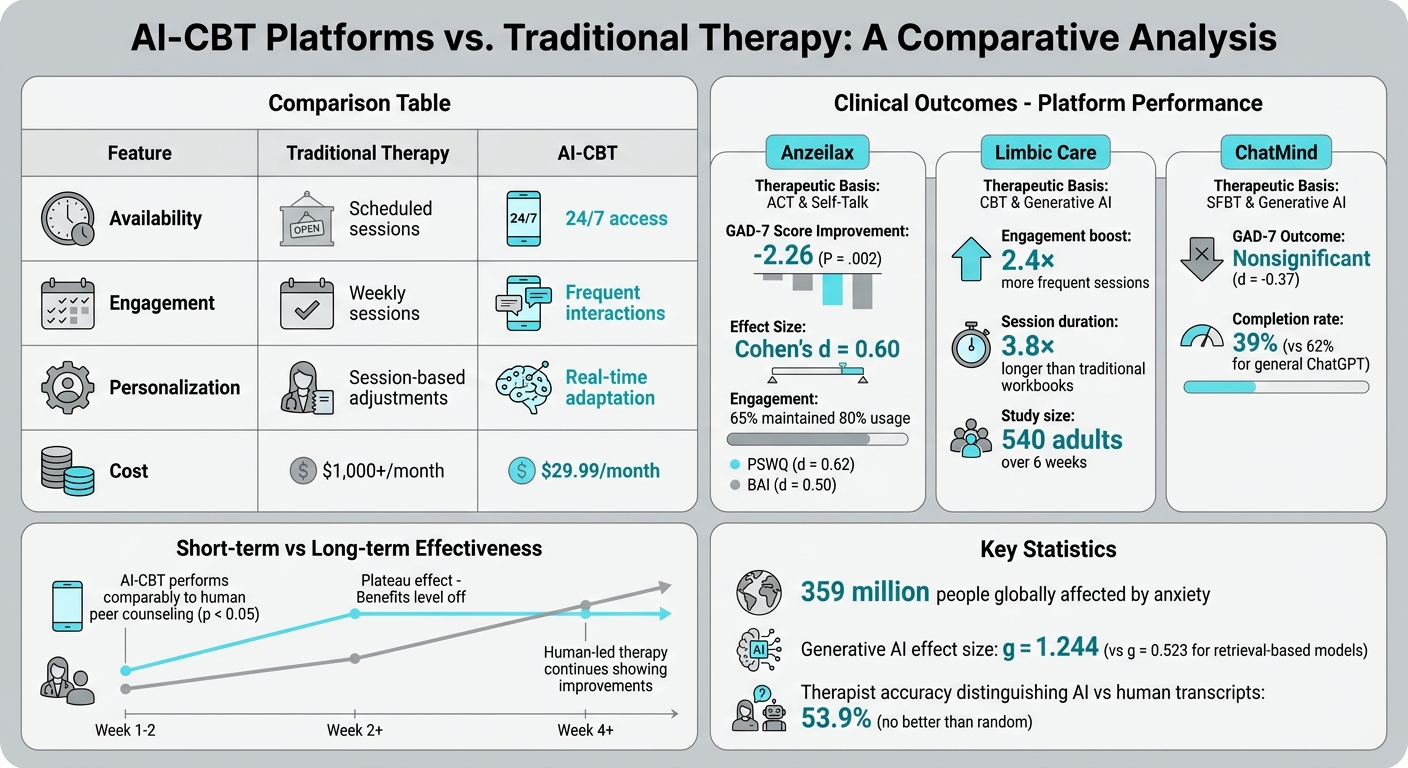

AI-powered CBT (Cognitive Behavioral Therapy) is reshaping how anxiety is treated by offering real-time, personalized support through generative AI. Anxiety affects 359 million people globally, and many of them have limited access to therapy; AI-CBT offers an accessible alternative.

Key findings:

- Engagement boost: In a study of 540 participants, users of the Limbic Care app engaged 2.4x more often and spent 3.8x longer in sessions than users of traditional digital CBT workbooks.

- Anxiety reduction: In a randomized trial, the Anzeilax platform significantly reduced anxiety symptoms, with an adjusted mean difference in GAD-7 scores of –2.26 compared to a control group.

- Cost-effective: AI-CBT is available 24/7 at a fraction of the cost of human-led therapy.

While AI-CBT shows promise, it struggles with long-term effectiveness, emotional nuance, and crisis intervention. Human oversight remains crucial for severe cases, and ethical concerns such as privacy and bias still need to be addressed. Combining AI with human support may offer the most effective solution.

How AI-CBT Works

CBT Principles in AI Systems

CBT, or Cognitive Behavioral Therapy, focuses on identifying and reframing negative thought patterns. AI systems designed for CBT replicate this process by guiding users through structured exercises. For example, they help users reframe thoughts like "I’m going to fail" into more neutral ones, such as "I’m having the thought that I might fail" [1][4].

Some platforms go a step further by tailoring interventions to the user’s emotional state. In a 10-week trial, the Anzeilax platform demonstrated this adaptability. It used self-distancing strategies to reduce rumination during negative moods and self-referencing techniques to boost motivation during positive states. This approach led to a notable reduction in anxiety, with the GAD-7 scores showing an adjusted mean difference of –2.26 compared to a control group [4].
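The mood-dependent choice described above can be pictured as a simple rule. The sketch below is only an illustration, not how Anzeilax works: the function name, the `mood_valence` score (assumed to run from -1 to 1), the zero threshold, and both phrase templates are hypothetical.

```python
def choose_self_talk(mood_valence: float, thought: str) -> str:
    """Pick a self-talk framing for a thought based on the user's mood.

    mood_valence is an assumed score in [-1, 1]; negative means low mood.
    Both templates are illustrative, not taken from any real platform.
    """
    if not -1.0 <= mood_valence <= 1.0:
        raise ValueError("mood_valence must be between -1 and 1")
    if mood_valence < 0:
        # Negative mood: self-distancing, to step back from the thought.
        return f"I'm having the thought that {thought}"
    # Neutral or positive mood: self-referencing, to reinforce motivation.
    return f"I can build on this: {thought}"


print(choose_self_talk(-0.6, "I might fail"))
print(choose_self_talk(0.7, "I prepared well for today"))
```

A real system would estimate mood from far richer signals than a single number, but the branching idea is the same: the framing changes with the user's current state.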

These therapeutic principles form the backbone of AI-driven digital therapy, enabling dynamic and personalized interactions.

AI Technology Behind Digital Therapy

The conversational aspect of AI-CBT relies heavily on Natural Language Processing (NLP) and large language models (LLMs). These technologies analyze what users say, detect emotional cues, and respond in ways that feel natural and empathetic [6].

Take the Limbic Care app, for instance. It uses a "cognitive layer" powered by machine learning to identify crisis signals and ensure responses align with established therapeutic practices [1]. This precision plays a critical role in helping users manage anxiety in real-time.

"LLM-powered applications can adapt in real-time to each user’s unique context, effectively bridging the gap when a human clinician is not available."

– Nature Communications Medicine [1]
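The article does not describe how Limbic Care's cognitive layer is built. As a rough picture of the general idea, screening a message before any generative reply is produced, here is a toy sketch. The phrase list, the `Routed` type, and `route_message` are all hypothetical, and simple keyword matching is far too crude for clinical use; a real system would rely on a validated classifier.

```python
from dataclasses import dataclass

# Hypothetical phrase list, for illustration only. Keyword matching misses
# many real crisis signals and is not a substitute for a validated model.
CRISIS_PHRASES = ("kill myself", "end my life", "suicide", "hurt myself")


@dataclass
class Routed:
    route: str    # "escalate" (hand off to a human) or "generate" (LLM reply)
    message: str  # the original user message, passed along unchanged


def route_message(text: str) -> Routed:
    """Decide whether a message goes to human escalation or to the LLM."""
    lowered = text.lower()
    if any(phrase in lowered for phrase in CRISIS_PHRASES):
        return Routed("escalate", text)
    return Routed("generate", text)


print(route_message("I'm nervous about my exam tomorrow").route)
print(route_message("Sometimes I want to end my life").route)
```

The design point is the ordering: the safety check runs first, so a flagged message never reaches the generative model without a human in the loop.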

Generative AI systems, which create original responses, have proven more effective than older retrieval-based systems. A systematic review found that generative conversational agents showed an effect size of g = 1.244 in reducing psychological distress, compared to g = 0.523 for retrieval-based models [6].

Benefits of AI-CBT vs. Traditional Therapy

The technology behind AI-CBT brings practical advantages that address common barriers in traditional therapy. With 24/7 availability, AI-CBT eliminates scheduling issues and reduces stigma, encouraging users to stay engaged. This accessibility translates into more frequent and sustained interactions, helping users stick with their treatment plans [1].

| Feature | Traditional Therapy | AI-CBT |
|---|---|---|
| Availability | Scheduled sessions | 24/7 access |
| Engagement | Weekly sessions | Frequent interactions [1] |
| Personalization | Session-based adjustments | Real-time adaptation |

These findings highlight how AI-CBT provides a flexible, interactive, and highly adaptive alternative to traditional therapy, offering on-demand support that can lead to better outcomes.

What Research Shows About AI-CBT Effectiveness

Clinical Results from AI-CBT Platforms

AI-based CBT platforms have shown measurable success in reducing anxiety symptoms. Take the Anzeilax app, for example. In a 10-week randomized controlled trial with 96 participants diagnosed with Generalized Anxiety Disorder (GAD), the app achieved a notable reduction in GAD-7 scores. The adjusted mean difference was –2.26 (P = .002), with an effect size of Cohen’s d = 0.60. Even better, these improvements held steady during a 15-week follow-up period [4].
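For readers unfamiliar with effect sizes, Cohen's d expresses the gap between two group means in units of their pooled standard deviation. The sketch below uses the textbook unadjusted formula with made-up numbers; the trial's own figure comes from an adjusted analysis, so this is not a reproduction of its calculation.

```python
from math import sqrt
from statistics import mean, variance


def cohens_d(group_a, group_b):
    """Cohen's d: difference in means divided by the pooled standard deviation."""
    n_a, n_b = len(group_a), len(group_b)
    # Pooled variance weights each sample variance by its degrees of freedom.
    pooled_var = (
        (n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)
    ) / (n_a + n_b - 2)
    return (mean(group_a) - mean(group_b)) / sqrt(pooled_var)


# Hypothetical GAD-7 score reductions, invented for this example.
app_group = [2, 4, 6]
control_group = [1, 3, 5]
print(cohens_d(app_group, control_group))  # 0.5
```

By the usual rule of thumb, values around 0.2 are considered small, 0.5 medium, and 0.8 large, which places the reported d = 0.60 in the medium range.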

Engagement levels were impressive, too. About 65% of participants stuck to at least 80% of their prescribed usage schedule throughout the trial [4] – a level of consistency that’s rare for mobile health apps.

Another example is the Limbic Care app. In a six-week trial involving 540 adults, this generative AI-powered platform boosted engagement frequency by 2.4 times and engagement duration by 3.8 times compared to traditional digital CBT workbooks. Users who participated in the app’s AI-driven "guided sessions" reported greater anxiety relief [1].

These results highlight the potential of AI-CBT to rival traditional therapeutic approaches in certain areas.

AI-CBT vs. Human Therapist-Led CBT

When comparing AI-delivered CBT with human-led therapy, the findings reveal both strengths and weaknesses. For instance, a 2026 study showed that AI-CBT performed on par with human peer counseling during the first two weeks of treatment, with both approaches significantly reducing depressive and anxiety symptoms (p < 0.05) [7]. However, while human-led therapy continued to show improvements beyond week four, AI-CBT’s effectiveness plateaued after two weeks [7].

"AI-iCBT shows promising short-term efficacy, comparable to human counseling up to week two, but its limitations in emotional perception and sustaining therapeutic momentum resulted in a plateau effect." – BMC Psychiatry [7]

One key drawback of AI-CBT is its lack of emotional nuance and personalization, which users often miss compared to human therapists. Interestingly, therapists themselves struggle to differentiate between human and AI-generated therapy transcripts, with accuracy no better than random guessing (53.9%) [3].

While the short-term benefits of AI-CBT are clear, its long-term success depends on maintaining user engagement and refining personalization.

Long-Term Results and User Retention

Long-term effectiveness relies heavily on sustained user engagement. For example, a pilot study of the ChatMind app, which uses a solution-focused brief therapy (SFBT) approach, showed that only 39% of participants completed all sessions over three weeks. This was lower than the 62% completion rate seen in a general-purpose ChatGPT group [3].

Platforms that excel in the long run often include advanced personalization features. These features adapt to users’ emotional states and behaviors, which can make a significant difference in outcomes and retention.

Here’s a quick comparison of platforms and their results:

| Platform | Therapeutic Basis | Anxiety Outcome (GAD-7) | Engagement Metric |
|---|---|---|---|
| Anzeilax | ACT & Self-Talk | Significant (d = 0.60) [4] | 65% maintained 80% usage [4] |
| Limbic Care | CBT & GenAI | Comparable to workbooks* [1] | 3.8× longer duration than workbooks [1] |
| ChatMind | SFBT & GenAI | Nonsignificant (d = -0.37) [3] | 39% session completion rate [3] |

*Note: Users who engaged in "guided sessions" on Limbic Care experienced stronger anxiety reductions. Anzeilax also demonstrated sustained improvements in other measures, such as the Penn State Worry Questionnaire (d = 0.62) and Beck Anxiety Inventory (d = 0.50) [4].

Overall, generative AI platforms show promise in keeping users engaged compared to static digital tools. However, achieving lasting benefits requires platforms to incorporate more adaptive and emotionally aware features [1][4].

Limitations and Challenges of AI-CBT

Privacy and Ethical Concerns

A growing concern with mental health apps is how they handle sensitive data. Many of these apps share user information without clear consent, raising serious privacy issues [9]. Unlike human therapists, who must follow HIPAA regulations and adhere to professional ethics, AI systems operate in a regulatory gray zone.

"For human therapists, there are governing boards and mechanisms for providers to be held professionally liable for mistreatment and malpractice. But when LLM counselors make these violations, there are no established regulatory frameworks." – Zainab Iftikhar, Ph.D. Candidate, Brown University [10]

AI chatbots often use phrases like "I understand" to simulate empathy, but this can create a misleading sense of emotional connection. This false rapport may lead users to develop an unhealthy reliance on an algorithm that lacks genuine understanding [9][10]. A 2025 study from Brown University identified 15 ethical risks tied to AI counselors, including poor contextual adaptation, inadequate safety protocols, and unfair biases [10]. These issues make it harder to scale AI-CBT responsibly, especially when addressing complex mental health needs.

When AI-CBT Isn’t Enough

AI-CBT platforms face significant limitations when dealing with severe mental health conditions, where human expertise is often essential. A review of 35 studies found that only 15 (around 43%) included safety measures like crisis intervention or access to human professionals [6]. This gap highlights the risks of relying solely on AI in high-acuity cases.

The consequences can be dire in crisis situations. AI systems have sometimes failed to recognize or appropriately address suicidal thoughts, and in some cases, they’ve even provided harmful advice [8][10]. High attrition rates – reaching up to 61% – further suggest that many users disengage when they realize AI tools aren’t meeting their needs [12].

"Chatbots lack the capacity to escalate emergencies, risking under-treatment of severe cases." – Frontiers in Psychiatry [12]

These challenges underscore the need for human involvement in situations where AI tools fall short, especially when dealing with life-threatening mental health crises.

Accuracy and Bias in AI Systems

AI systems are prone to errors, sometimes "hallucinating" or generating incorrect information. Additionally, models trained primarily on Western datasets can exhibit biases, potentially alienating users from other backgrounds or perpetuating stereotypes [3][9][10]. For instance, studies reveal that AI tools often stigmatize conditions like alcohol dependence and schizophrenia more than they do depression [8].

"Algorithms can detect patterns, but they still make mistakes, and right now, the user is responsible for those errors." – Grace Berman, LCSW [11]

Another challenge is the "plateau effect", where the benefits of AI-CBT level off after about two weeks, unlike human therapy, which tends to yield ongoing improvements [7]. Technical flaws can also interfere with therapy goals. For example, AI chatbots may unintentionally reinforce maladaptive behaviors, such as offering constant reassurance to users with OCD, which undermines treatments like Exposure and Response Prevention (ERP) [11]. A review of 14 studies found that only one showed notable reductions in anxiety, and AI-CBT has proven largely ineffective for older adults [13].

Aidx.ai: AI-CBT Built for Personal Development

Core Features of Aidx.ai

Aidx.ai combines CBT, DBT, ACT, and NLP techniques with goal-setting tools to offer personalized support for anxiety and personal growth. Over time, the platform identifies user behavior patterns, adjusts its coaching approach, and visually tracks progress through customizable roadmaps [5].

It relies on clinically validated scales like GAD-7, BAI, and PHQ-9 to establish baselines and monitor improvements [1]. Its advanced NLP capabilities respond in real time to users’ emotional states and specific anxiety symptoms [5].
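To make baseline tracking concrete, here is a minimal sketch of scoring the GAD-7, which has seven items each rated 0 to 3, with standard severity bands at totals of 5, 10, and 15. The function names and the sample answers are hypothetical, and this is not Aidx.ai's implementation.

```python
def gad7_total(responses):
    """Sum seven GAD-7 item scores, each an integer from 0 to 3."""
    if len(responses) != 7 or any(r not in (0, 1, 2, 3) for r in responses):
        raise ValueError("GAD-7 needs exactly seven answers scored 0-3")
    return sum(responses)


def gad7_severity(total):
    """Map a GAD-7 total (0-21) to the standard severity band."""
    if total >= 15:
        return "severe"
    if total >= 10:
        return "moderate"
    if total >= 5:
        return "mild"
    return "minimal"


# Hypothetical answers at baseline and at a later check-in.
baseline = gad7_total([2, 2, 1, 2, 1, 2, 2])
follow_up = gad7_total([1, 1, 1, 1, 1, 1, 0])
print(baseline, gad7_severity(baseline))    # 12 moderate
print(follow_up, gad7_severity(follow_up))  # 6 mild
print("change:", follow_up - baseline)      # change: -6
```

Comparing each check-in against the stored baseline is what turns a one-off questionnaire into a progress measure.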

To keep users on track, Aidx.ai provides weekly accountability reports. Users can even involve friends or family by allowing them to receive automatic progress updates every Monday via email – no account needed on their end. Dr. Gail Matthews’ research at Dominican University highlights that writing down goals, paired with action steps and weekly accountability, can boost achievement rates by 78%. Aidx.ai incorporates this proven strategy into its coaching framework.

These features make Aidx.ai a practical tool for both personal development and organizational growth.

Benefits for Individuals and Businesses

For individuals, Aidx.ai offers an affordable and flexible solution for managing emotions, improving decision-making, and preventing burnout. At just $29.99/month, it provides 24/7 expert-level coaching – significantly less expensive than traditional therapy, which can cost over $1,000 per month.

Businesses also benefit from Aidx.ai’s scalable coaching platform, which eliminates the need for hiring multiple practitioners while maintaining high-quality support. The platform prioritizes privacy with encryption and GDPR compliance. Created by Natalia Komis, an experienced MBR therapist and coach, and Nicklas Wolff, a cybersecurity expert, Aidx.ai has earned recognition through awards and accolades in the startup world.

Evidence-Based Approach to Anxiety Management

Aidx.ai uses a research-driven framework to tackle anxiety. By integrating mindfulness-enhanced CBT, it tailors mindfulness practices to each user, helping them build these skills while reducing rumination and worry [2]. Its cognitive layer combines Large Language Models with specialized machine learning to ensure interactions are clinically safe and reliable [1].

The platform’s Insights feature continuously monitors stress levels, burnout risks, and overall emotional health. By identifying early warning signs and trends, it enables proactive measures rather than reactive crisis management. This approach addresses the common "plateau effect" seen in some digital therapy tools, keeping users engaged and adapting as their needs evolve.

Conclusion

AI-powered CBT represents a major step forward in scaling anxiety treatment. Research highlights that platforms leveraging generative AI can significantly lower anxiety levels while increasing user engagement – sessions last up to 3.8 times longer compared to static digital workbooks [1]. With 359 million people worldwide affected by anxiety disorders [3], AI-CBT offers a practical solution, especially for those facing long wait times or limited access to therapists. A recent study (October 2023–February 2024) revealed that blended AI-human therapy models reduced clinician time to just 1.6 hours per patient while achieving outcomes comparable to traditional therapy [14].

Platforms that include personalized features – such as guided sessions, adaptive self-talk, and empathic feedback – consistently outperform generic tools in reducing symptoms and keeping users engaged [1][4]. These tailored approaches and real-time adjustments are key to meeting the diverse needs of individuals seeking help.

However, challenges remain. Safety measures must advance to prevent issues like AI hallucinations and to improve crisis detection. Regulatory standards also need to evolve to ensure consistent quality across platforms. As Dr. Jee Hang Lee from Sangmyung University observed:

"The incorporation of context-sensitive self-talk within an ACT-based DTx framework offers a promising and accessible solution for treating individuals with GAD" [4].

Tackling these challenges will shape the future of anxiety treatment. Combining AI’s efficiency with human oversight strikes a balance between addressing technical hurdles and providing emotional support. This hybrid model allows AI to handle routine monitoring and support while reserving human intervention for complex cases. As research progresses, this collaboration between technology and clinicians will continue to refine how anxiety care is delivered.

FAQs

Is AI-CBT as effective as a human therapist?

Recent research indicates that AI-powered Cognitive Behavioral Therapy (CBT) can match human-led support in the short term. In one study, AI-CBT performed on par with human peer counseling during the first two weeks, reducing both anxiety and depressive symptoms, but its benefits then plateaued while human-led therapy continued to improve. While outcomes differ across platforms, AI-driven CBT provides a scalable option, particularly where access to human therapists is limited.

Who should not use AI-CBT for anxiety?

AI-CBT isn’t suitable for people dealing with severe mental health challenges, such as those at high risk of harm, experiencing active suicidal thoughts, or managing complex co-occurring conditions. These cases typically call for more tailored, hands-on care provided by trained professionals.

How safe and private are AI-CBT chat sessions?

Privacy and security vary by platform. Some services encrypt conversations and restrict human access to them, but many mental health apps share user information without clear consent, and AI systems are not bound by the regulations and professional ethics that apply to human therapists. Before sharing sensitive information, check how a given platform stores, encrypts, and shares your data.